Table of Contents

- The Shift to Microservices Architecture

- Strategic Scaling: Horizontal vs. Vertical

- The 8-Step Microservices Development Workflow

- Infrastructure as Code and Orchestration

- Performance Monitoring and Auto-Scaling

- Common Pitfalls in Scaling Microservices

- The Future of Scalable Architecture

- Frequently Asked Questions

The Shift to Microservices Architecture

Modern enterprise systems have been moving away from rigid, monolithic structures toward more modular environments. Digital transformation services depend on the ability to pivot and scale without re-engineering the entire ecosystem from scratch. By decoupling services, organizations can keep a surge in traffic on a specific feature, like a payment gateway, from compromising the stability of the whole platform. This modularity is the cornerstone of bespoke software development, allowing teams to deploy updates to individual components independently of one another.

Problem: Monolithic architectures create single points of failure where a bug in one module can bring down the entire application.

Solution: Microservices confine most faults to the affected service and its containers, so the rest of the system can stay operational, provided that callers handle a failed dependency gracefully (for example, with timeouts and fallbacks).

Strategic Scaling: Horizontal vs. Vertical

Understanding how to distribute load is vital for any UK software development company. Vertical scaling, or “scaling up,” involves adding more power to an existing server, which is often a quick fix for early-stage growth but eventually hits a hardware ceiling. In contrast, horizontal scaling, or “scaling out,” adds more instances of a service to share the workload, so capacity can keep growing as long as the service is stateless and its shared dependencies, such as databases, can keep up. Most high-performing digital systems use a hybrid approach, starting with optimized resource allocation before transitioning to a fully distributed horizontal model to manage global traffic spikes.

Horizontal Scaling Strategy

A robust scaling out strategy focuses on redundancy and load distribution across multiple geographical regions.

- Speciality: Capacity that grows with instance count, plus high availability.

- Typical Stage: Deployment Phase 2.

- Key Features: Load balancing, health checks, and service discovery.
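To make the load balancing and health check features above more concrete, here is a minimal sketch of a round-robin balancer that skips unhealthy instances. The `Instance` and `RoundRobinBalancer` names are hypothetical, and a production setup would normally rely on a dedicated load balancer or service mesh rather than hand-written code.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class Instance:
    """One replica of a service (hypothetical name and fields)."""
    name: str
    healthy: bool = True


@dataclass
class RoundRobinBalancer:
    """Cycles through the healthy instances in order."""
    instances: list[Instance]
    _counter: count = field(default_factory=count, init=False, repr=False)

    def pick(self) -> Instance:
        """Return the next healthy instance, skipping unhealthy ones."""
        healthy = [i for i in self.instances if i.healthy]
        if not healthy:
            raise RuntimeError("no healthy instances available")
        return healthy[next(self._counter) % len(healthy)]
```

Marking an instance as unhealthy (here by setting `healthy = False`, which a real health check would do automatically) removes it from rotation without affecting the others.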

Vertical Scaling Strategy

Increasing the resources of a single node is effective for data-heavy tasks that require significant localized processing power.

- Speciality: Performance optimization for single-tenant loads.

- Typical Stage: Initial Launch Phase.

- Key Features: CPU/RAM upgrades, reduced network latency, and simplified management.

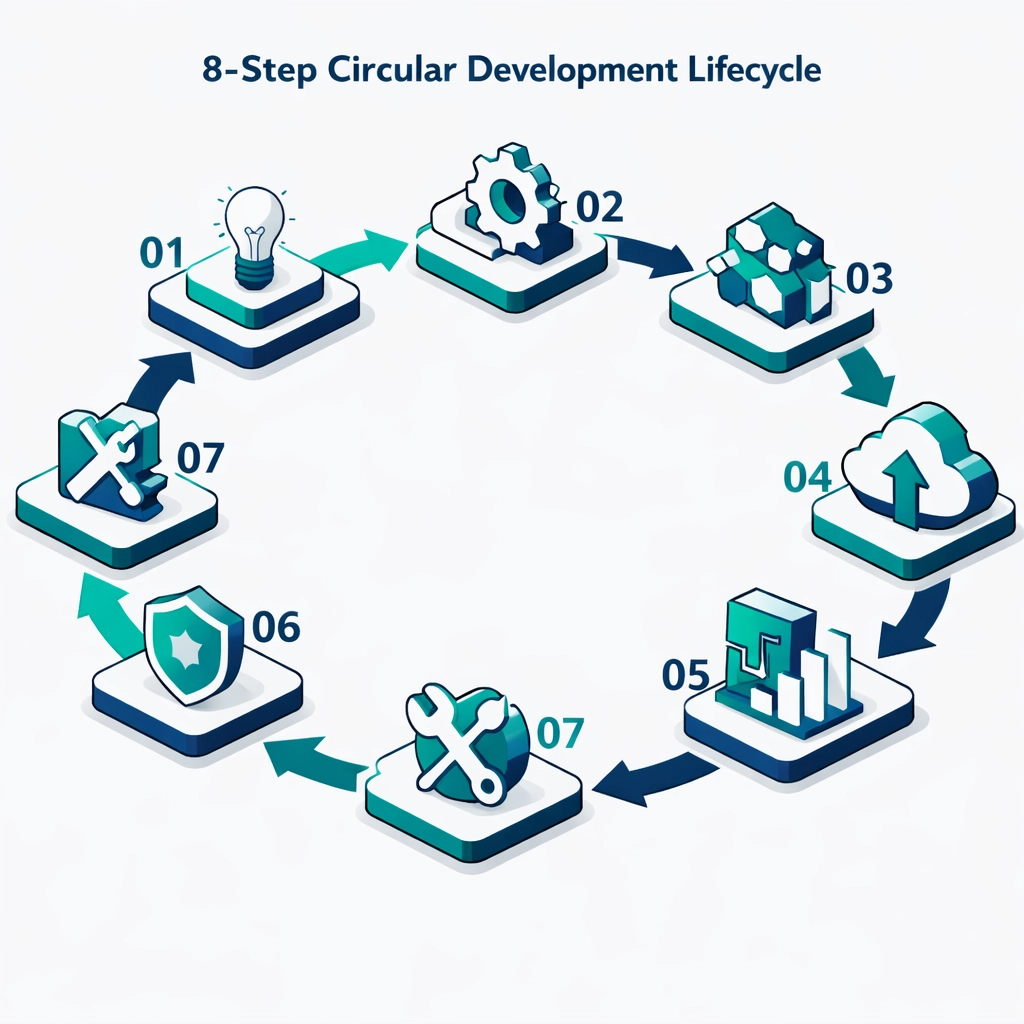

The 8-Step Microservices Development Workflow

Building a scalable environment requires a disciplined approach to the development lifecycle to ensure every service is production-ready. At Chimpare, our bespoke software development process integrates rigorous testing and automated deployment pipelines to maintain high velocity. This workflow ensures that each microservice is stateless, independently deployable, and fully integrated into the wider ecosystem. Following a structured path prevents technical debt and ensures that the architecture remains manageable as the service count grows from dozens to hundreds.

1. Discovery & Strategy

The process begins with mapping business requirements to technical capabilities to define the service boundaries.

- Speciality: Architecture blue-printing.

- Key Features: Domain-driven design and stakeholder alignment.

2. API Design & Specification

Defining how services communicate is critical for maintaining consistency across a distributed system.

- Speciality: Contract-first development.

- Key Features: RESTful APIs, GraphQL, and documentation.

3. Containerization

Packaging code with its dependencies ensures consistent behavior across development, staging, and production environments.

- Speciality: Environment parity.

- Key Features: Docker, Alpine Linux, and multi-stage builds.

4. Continuous Integration (CI)

Automated build and test cycles catch regressions early, so that only code that passes the agreed quality checks is merged into the main branch.

- Speciality: Code quality assurance.

- Key Features: Unit testing, linting, and security scanning.

5. Orchestration Setup

Deploying containers requires a robust management layer to handle networking, storage, and scaling automatically.

- Speciality: Infrastructure management.

- Key Features: Kubernetes, Helm charts, and ingress controllers.

6. Automated Testing

Beyond unit tests, integration and end-to-end tests verify that services interact correctly under various conditions.

- Speciality: System reliability.

- Key Features: Selenium, Postman collections, and mock services.
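As an illustration of the mock services mentioned above, the sketch below tests one service against a stand-in for its dependency using Python's standard `unittest.mock`. The `OrderService` class, the `charge` method, and the response shape are all hypothetical and chosen only for the example.

```python
from unittest.mock import Mock


class OrderService:
    """Hypothetical service that depends on a separate payment service."""

    def __init__(self, payment_client):
        self.payment_client = payment_client

    def place_order(self, order_id: str, amount_pence: int) -> str:
        response = self.payment_client.charge(
            order_id=order_id, amount_pence=amount_pence
        )
        return "confirmed" if response["status"] == "approved" else "rejected"


def test_order_confirmed_when_payment_approved():
    # The mock replaces the real payment service for this test.
    payment_client = Mock()
    payment_client.charge.return_value = {"status": "approved"}

    result = OrderService(payment_client).place_order("o-1", 500)

    assert result == "confirmed"
    payment_client.charge.assert_called_once_with(order_id="o-1", amount_pence=500)
```

A mock like this checks the calling service in isolation; it does not replace integration tests against the real dependency.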

7. Deployment & Canary Releases

Gradually rolling out updates to a small subset of users minimizes the impact of potential deployment errors.

- Speciality: Risk mitigation.

- Key Features: Canary rollouts, blue-green deployments, and feature flags.
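One way to send a small subset of users to a new version is to hash each user ID into a stable bucket. The sketch below assumes a string user ID and a percentage setting; the function names are hypothetical, and in practice this decision is often made by an ingress controller, service mesh, or feature-flag tool.

```python
import hashlib


def canary_bucket(user_id: str) -> int:
    """Map a user ID to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % 100


def route(user_id: str, canary_percent: int) -> str:
    """Send roughly canary_percent of users to the canary release."""
    return "canary" if canary_bucket(user_id) < canary_percent else "stable"
```

Because the bucket depends only on the user ID, a given user stays on the same version between requests, and raising `canary_percent` only ever moves users from stable to canary.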

8. Iterative Monitoring

Post-deployment analysis provides the data needed to refine the service and plan for the next development cycle.

- Speciality: Continuous improvement.

- Key Features: User feedback loops and performance auditing.

Infrastructure as Code and Orchestration

Managing a complex microservices estate by hand quickly becomes impractical for any IT department. Infrastructure as Code (IaC) allows developers to define server configurations and network topologies in version-controlled files, ensuring repeatability. Tools like Terraform and Ansible work alongside orchestration platforms like Kubernetes to manage the lifecycle of thousands of containers. This level of automation is essential for digital transformation services that require rapid scaling and self-healing capabilities when hardware failures occur.

Problem: Manual configuration of cloud resources leads to “environment drift” and inconsistent performance across different regions.

Solution: Infrastructure as Code builds every environment from the same version-controlled definition, which greatly reduces manual errors and deployment silos.
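The idea of environment drift can be shown with a small comparison between a declared configuration and what is actually running. This is only a conceptual sketch with hypothetical keys and values, not how Terraform or Ansible work internally.

```python
MISSING = "<missing>"  # marker for a setting present on only one side


def find_drift(declared: dict, actual: dict) -> dict:
    """Return {setting: (declared_value, actual_value)} for every mismatch."""
    drift = {}
    for key in declared.keys() | actual.keys():
        want = declared.get(key, MISSING)
        have = actual.get(key, MISSING)
        if want != have:
            drift[key] = (want, have)
    return drift


# Hypothetical example values.
declared = {"cpu": 2, "memory_gb": 4, "replicas": 3}
actual = {"cpu": 2, "memory_gb": 8, "replicas": 3, "debug": True}
print(find_drift(declared, actual))
```

Here the report would flag `memory_gb`, which was changed by hand, and `debug`, which exists in the environment but not in the declared configuration.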

Performance Monitoring and Auto-Scaling

Data-driven decision-making underpins a successful microservices strategy and requires real-time visibility into every node. Tools like Prometheus and Grafana let engineers collect and visualize system health metrics, which can then drive alerts and automated scaling rules. When traffic exceeds a predefined threshold, the system spins up new instances to handle the load and scales back down when demand subsides. This elastic approach helps control cloud costs while keeping response times steady for end users during traffic spikes.
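A threshold-based scaling decision can be sketched with a proportional rule similar to the one documented for the Kubernetes Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric ÷ target metric). The function name, parameters, and default limits below are assumptions for illustration, and real autoscalers add tolerances and stabilization windows that are omitted here.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
) -> int:
    """Scale the replica count in proportion to metric pressure, within limits."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))
```

For example, four replicas running at 90% average CPU against a 60% target would scale out to six, while the same four replicas at 30% would scale in to two.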

Key Performance Metrics

Monitoring the right data points is essential for identifying bottlenecks before they impact the user experience.

- Throughput: Measuring the number of requests processed per second.

- Error Rate: Tracking the percentage of failed requests to identify unstable services.

- Latency: Monitoring the time taken for a round-trip request between services.

- Resource Saturation: Checking CPU and memory usage to trigger auto-scaling events.
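The first three metrics above can be derived from a window of request records, as in the following sketch. The `RequestRecord` shape and the choice of a nearest-rank 95th percentile for latency are assumptions made for the example; resource saturation comes from host or container metrics and is not covered here.

```python
from dataclasses import dataclass


@dataclass
class RequestRecord:
    """One observed request (hypothetical shape)."""
    duration_ms: float
    ok: bool


def summarize(records: list[RequestRecord], window_seconds: float) -> dict:
    """Compute throughput, error rate, and p95 latency for one time window."""
    if not records or window_seconds <= 0:
        raise ValueError("need at least one record and a positive window")
    durations = sorted(r.duration_ms for r in records)
    # Nearest-rank percentile: ceil(0.95 * n), done in integer arithmetic.
    rank = (95 * len(durations) + 99) // 100
    failures = sum(1 for r in records if not r.ok)
    return {
        "throughput_rps": len(records) / window_seconds,
        "error_rate": failures / len(records),
        "p95_latency_ms": durations[rank - 1],
    }
```

A percentile is used rather than an average because a small number of very slow requests can hide behind a healthy-looking mean.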

Common Pitfalls in Scaling Microservices

While microservices offer immense power, they also introduce complexities that can derail a project if not managed correctly. One of the most common mistakes is “distributed monolith” design, where services are so tightly coupled that they cannot be deployed or scaled independently. Another frequent issue is neglecting centralized logging, which makes it nearly impossible to trace errors across multiple service boundaries. A professional UK software development company prioritizes observability and loose coupling to avoid these architectural traps.
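Tracing an error across service boundaries usually depends on every service logging a shared request identifier. The sketch below shows the idea with structured JSON log lines and a correlation ID; the function names and log fields are hypothetical, and a real system would ship these lines to a central log store rather than keep them in a list.

```python
import json
import uuid


def new_correlation_id() -> str:
    """Create an ID at the edge of the system and pass it to every service."""
    return uuid.uuid4().hex


def log_line(service: str, correlation_id: str, message: str) -> str:
    """Render one structured log line as JSON."""
    return json.dumps(
        {"service": service, "correlation_id": correlation_id, "message": message},
        sort_keys=True,
    )


def trace(lines: list[str], correlation_id: str) -> list[str]:
    """Return the services one request touched, in log order."""
    records = (json.loads(line) for line in lines)
    return [r["service"] for r in records if r["correlation_id"] == correlation_id]
```

With the same ID present in every line, filtering the central log by that ID reconstructs the path of a single request through the system.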

Avoid These Errors:

- Over-Engineering Early: Don’t break a simple application into 50 microservices before you have the traffic to justify the overhead.

- Ignoring Network Latency: Every internal call between services adds time; optimize your communication protocols to minimize lag.

- Lack of Security Standards: Ensure every service follows a unified authentication and authorization protocol like OAuth2.

- Manual Scaling: If you are still manually adding servers during a traffic spike, you haven’t fully embraced digital transformation.
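On the network latency point above, one common mitigation (among several) is to issue independent internal calls concurrently instead of one after another. The sketch below simulates this with `asyncio`; the service names and delays are made up, and `asyncio.sleep` stands in for real network calls.

```python
import asyncio
import time


async def call_service(name: str, delay_s: float) -> str:
    """Stand-in for a network call; the sleep simulates round-trip latency."""
    await asyncio.sleep(delay_s)
    return f"{name}:ok"


async def fetch_sequential(calls: list[tuple[str, float]]) -> list[str]:
    """Await each call in turn, so total time is roughly the sum of delays."""
    return [await call_service(name, delay) for name, delay in calls]


async def fetch_concurrent(calls: list[tuple[str, float]]) -> list[str]:
    """Start all calls together, so total time is roughly the slowest delay."""
    return list(await asyncio.gather(*(call_service(n, d) for n, d in calls)))


def timed(coro) -> tuple[list[str], float]:
    """Run a coroutine to completion and return (result, elapsed seconds)."""
    start = time.perf_counter()
    result = asyncio.run(coro)
    return result, time.perf_counter() - start
```

This only helps when the calls do not depend on each other's results; a chain of dependent calls still pays for every hop.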

The Future of Scalable Architecture

As we look toward the next decade, the integration of AI-driven auto-scaling and serverless computing is likely to further simplify the management of microservices. Businesses that adopt these technologies today will be better positioned to handle the data-heavy demands of the future. The goal is a largely autonomous infrastructure that predicts traffic patterns and adjusts its own footprint in real time. For any organization pursuing digital transformation services, staying ahead of these trends helps maintain a competitive advantage in an increasingly digital world.

Frequently Asked Questions

What is the best language for microservices?

There is no single “best” language, as microservices allow for a polyglot approach where you use the best tool for each task. Often, Node.js is used for high-concurrency APIs, while Python is preferred for data processing.

How do microservices improve SEO and user experience?

Microservices do not improve SEO directly, but by allowing backend components to scale independently they can help keep response times low and the site reliable under load. Page performance is one of the signals search engines use for ranking, and faster, more reliable pages tend to retain more users.

What is the cost of moving to a microservices architecture?

The initial investment in bespoke software development is usually higher than for a monolith because of the infrastructure setup. For systems that need to scale, however, the long-term savings in maintenance, scaling efficiency, and reduced downtime can deliver a higher ROI.

Is Kubernetes necessary for microservices?

While not strictly necessary for very small projects, Kubernetes has become the industry standard for managing containerized applications at scale. It provides the essential automation needed for self-healing and load balancing.

How do I handle data consistency across services?

Consistency is typically managed through eventual consistency models and event-driven architectures using message brokers like RabbitMQ or Kafka. Each service updates its own data and publishes an event, and other services apply that event shortly afterwards, so the system converges on a consistent state without forcing every request to wait on a distributed transaction.
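The event-driven approach can be sketched with an in-memory stand-in for a message broker. The `EventBus` and `InventoryService` classes, the topic name, and the event fields are hypothetical; a real deployment would use a RabbitMQ or Kafka client, and the duplicate check reflects the fact that brokers may deliver a message more than once.

```python
from collections import defaultdict, deque


class EventBus:
    """In-memory stand-in for a message broker (illustration only)."""

    def __init__(self):
        self.queue = deque()
        self.subscribers = defaultdict(list)

    def subscribe(self, topic, handler):
        self.subscribers[topic].append(handler)

    def publish(self, topic, event):
        self.queue.append((topic, event))  # delivered later, not immediately

    def drain(self):
        while self.queue:
            topic, event = self.queue.popleft()
            for handler in self.subscribers[topic]:
                handler(event)


class InventoryService:
    """Keeps its own stock data and updates it from order events."""

    def __init__(self):
        self.stock = {"widget": 10}
        self.seen_event_ids = set()

    def on_order_placed(self, event):
        if event["event_id"] in self.seen_event_ids:
            return  # idempotent: ignore a redelivered event
        self.seen_event_ids.add(event["event_id"])
        self.stock[event["sku"]] -= event["quantity"]
```

Between `publish` and `drain`, the inventory data is briefly out of date, which is the window that "eventual" consistency refers to.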